Why You Need This Cheat Sheet

When you are writing code and trying to figure out if your solution is good enough, you need a quick mental ranking of complexities. Is O(n log n) good? Is O(n²) acceptable? What even is O(n!)?

This post is the reference I wish I had when I started learning about algorithms. Bookmark it. Come back to it. Let it sink in over time.

The Ranking: Best to Worst

Here they are, from fastest to slowest. Memorize this order — it is the foundation of all algorithm analysis:

O(1) → O(log n) → O(n) → O(n log n) → O(n²) → O(n³) → O(2ⁿ) → O(n!)

Everything up to O(n log n) is generally considered efficient. O(n²) is acceptable for small inputs (n < 1,000). Anything worse than O(n²) usually means you need a better algorithm.

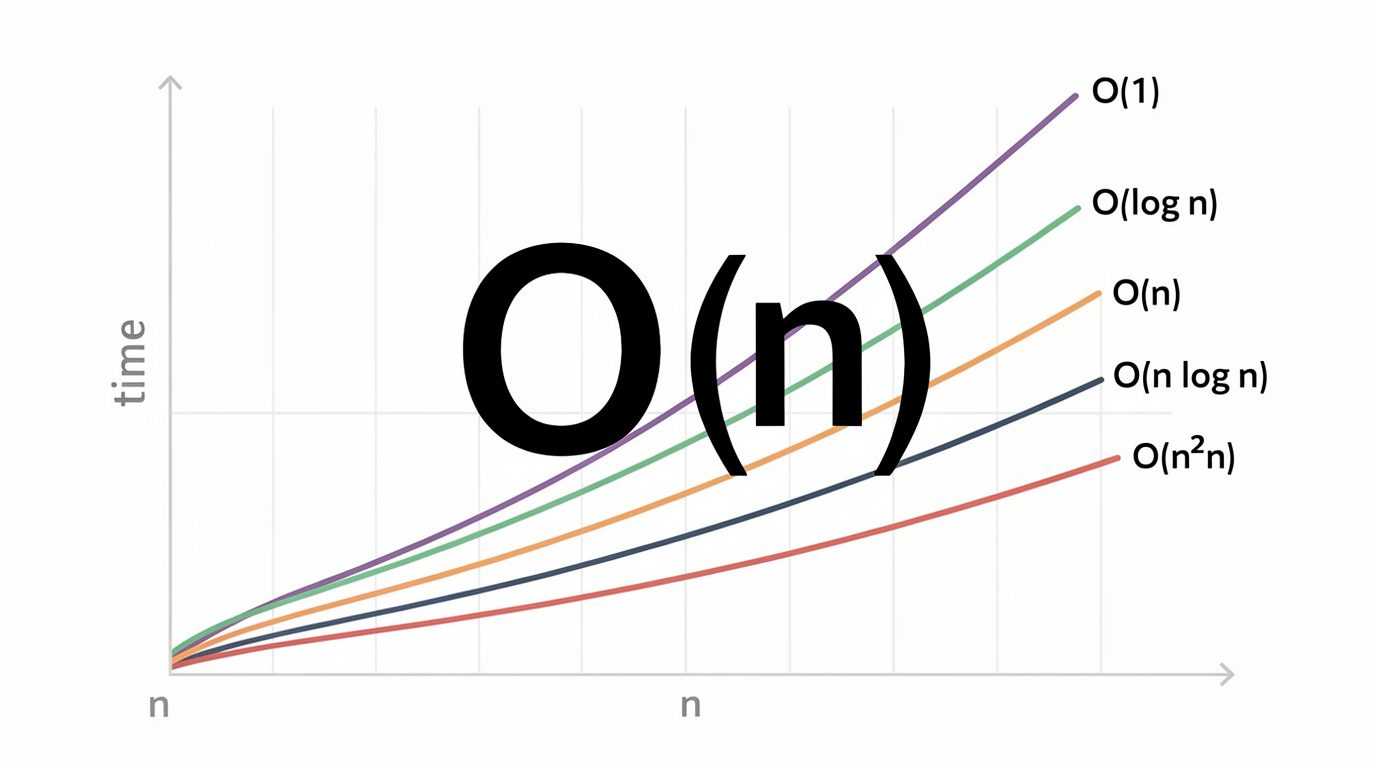

The Visual Comparison

The interactive bar chart below shows you exactly how these complexities compare at different input sizes. Step through each one and pay attention to how the bars change as n grows. It makes the differences visceral:

<iframe src="/en/dsa/embed.html?topic=2&lang=en" width="100%" height="200" style="border:none;border-radius:12px;overflow:hidden" loading="lazy"></iframe>

What Each Complexity Feels Like

Let me give you an intuition for each one that goes beyond the math:

| Complexity | Nickname | What It Feels Like | Real Example | |---|---|---|---| | O(1) | Constant | Instant. Like flipping a light switch. | Array index, hash lookup | | O(log n) | Logarithmic | Looking up a word in a dictionary — flip to middle, pick a half, repeat. | Binary search | | O(n) | Linear | Reading a book page by page. Touch everything once. | Finding max, linear search | | O(n log n) | Linearithmic | Sorting a deck of cards efficiently. | Merge sort, quick sort | | O(n²) | Quadratic | Comparing every person in a room with every other person. | Bubble sort, nested loops | | O(n³) | Cubic | Three nested loops. Rarely acceptable. | Naive matrix multiplication | | O(2ⁿ) | Exponential | Trying every combination of on/off switches. | Power set, brute force | | O(n!) | Factorial | Trying every possible arrangement. Insane growth. | Permutations, traveling salesman |

The Practical Input Size Guide

Here is something incredibly useful that most tutorials skip — what input sizes can each complexity handle in roughly 1 second (assuming ~100 million operations per second):

| Complexity | Max n (~1 sec) | Real-World Meaning | |---|---|---| | O(1) | Any | No limits | | O(log n) | Any | No practical limits | | O(n) | ~100,000,000 | Process 100M items in 1 sec | | O(n log n) | ~5,000,000 | Sort 5M items in 1 sec | | O(n²) | ~10,000 | Only small datasets | | O(n³) | ~500 | Very small datasets | | O(2ⁿ) | ~25 | Tiny inputs only | | O(n!) | ~12 | Almost nothing |

Use this table when choosing your approach. If your input could be 1 million items, you need O(n log n) or better. If it is always under 100 items, even O(n²) is fine.

Common Operations Quick Reference

Arrays / Lists

| Operation | Time | Notes | |---|---|---| | Access by index | O(1) | Direct memory access | | Search (unsorted) | O(n) | Must check each element | | Search (sorted) | O(log n) | Binary search | | Insert at end | O(1)* | Amortized for dynamic arrays | | Insert at beginning | O(n) | Must shift all elements | | Sort | O(n log n) | Best comparison-based |

Hash Maps / Sets

| Operation | Time | Notes | |---|---|---| | Insert | O(1)* | Amortized average | | Lookup | O(1)* | Amortized average | | Delete | O(1)* | Amortized average | | Iterate all | O(n) | Touch every element |

Trees (Balanced BST)

| Operation | Time | Notes | |---|---|---| | Search | O(log n) | Halves search space | | Insert | O(log n) | Find position + insert | | Delete | O(log n) | Find + restructure | | Traverse all | O(n) | Visit every node |

Sorting Algorithms

| Algorithm | Best | Average | Worst | Space | |---|---|---|---|---| | Quick Sort | O(n log n) | O(n log n) | O(n²) | O(log n) | | Merge Sort | O(n log n) | O(n log n) | O(n log n) | O(n) | | Heap Sort | O(n log n) | O(n log n) | O(n log n) | O(1) | | Bubble Sort | O(n) | O(n²) | O(n²) | O(1) | | Insertion Sort | O(n) | O(n²) | O(n²) | O(1) |

The Mental Models That Help

When you are trying to figure out the complexity of code you are reading or writing, these mental shortcuts help:

- O(1): "I can answer instantly, no matter how much data there is."

- O(log n): "I cut my problem in half with every step."

- O(n): "I look at each item exactly once."

- O(n log n): "I look at everything, and for each thing I do a halving operation."

- O(n²): "I compare everything with everything else."

- O(2ⁿ): "For each element, I have 2 choices, and I try all combinations."

- Two Sum — O(n) with hash map vs O(n²) brute force

- Merge Intervals — O(n log n) sort + O(n) merge

- 3Sum — O(n²) with sorting + two pointers

- Longest Substring Without Repeating Characters — O(n) sliding window

- Kth Largest Element — O(n) quickselect vs O(n log n) sort

- Word Search — O(n × m × 4^L) backtracking — exponential!

When in doubt, count the loops: 0 loops = O(1), 1 loop = O(n), 2 nested loops = O(n²).

Practice Problems

These are great for building intuition across all complexity classes:

Wrapping Up

This cheat sheet covers the essentials you need for algorithm analysis. Here is my advice:

1. Memorize the ranking: O(1) < O(log n) < O(n) < O(n log n) < O(n²) < O(2ⁿ) < O(n!) 2. Use the input size table to quickly judge if your solution is fast enough 3. Know the common operations — most of them follow predictable patterns 4. Practice, practice, practice — the more problems you solve, the faster you will recognize complexities by sight

Big O is a tool, not a test. Use it to write better code and make smarter engineering decisions. That is what it is for.

Happy coding!